
GPU power issue Dell 7820

The system is officially qualified by Dell for up to two high-performance, double-width graphics cards. Running three GPUs will likely require careful planning and potentially specific single-width models and dual CPUs.

Key Limitations and Considerations

- Official Support: Dell officially supports a maximum of two PCI Express x16 Gen 3 graphics cards. The total supported graphics power is up to 600W with a single CPU configuration (2 x 300W cards) or up to 320W with a dual CPU configuration (2 x 160W cards).

- Power Supply: The Dell Precision 7820 Tower typically comes with a single 950W power supply unit (PSU). Running three power-hungry GPUs may exceed the capacity or the available power connections of the standard PSU.

- Physical Space and PCIe Slots: The motherboard has six expansion slots in total (two PCIe Gen 3 x16 slots, others wired as x8, x4, and x1, plus a legacy PCI slot). While there are enough slots, physical space is a major constraint: most high-performance GPUs are double-width, meaning each one occupies two physical slots.

- Configuration Requirements: Certain configurations, such as specific high-end 3D cards, are only qualified to be used in specific PCIe slots (e.g., slot 2 and slot 4 for the NVIDIA GeForce RTX 3080/3090).
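The power limits above reduce to simple arithmetic: 2 x 300 W = 600 W with one CPU, and 2 x 160 W = 320 W with two CPUs. Below is a minimal planning sketch that uses only the numbers stated in these notes. It checks a list of per-card wattages against the two-card limit and the graphics power budget. The wattages in the usage lines are hypothetical, so substitute the rated board power of your actual cards. The sketch does not model the 950 W PSU, power connectors, or slot spacing.

```python
# Limits as stated in the notes above for the Dell Precision 7820 Tower.
MAX_SUPPORTED_CARDS = 2
GRAPHICS_BUDGET_W = {1: 600, 2: 320}  # total graphics power (W) by number of CPUs


def check_gpu_plan(card_watts: list[int], cpu_count: int) -> list[str]:
    """Return the problems with a GPU plan; an empty list means it fits the stated limits."""
    if cpu_count not in GRAPHICS_BUDGET_W:
        raise ValueError("cpu_count must be 1 or 2")
    problems = []
    if len(card_watts) > MAX_SUPPORTED_CARDS:
        problems.append(
            f"{len(card_watts)} cards exceed the official maximum of {MAX_SUPPORTED_CARDS}"
        )
    total = sum(card_watts)
    budget = GRAPHICS_BUDGET_W[cpu_count]
    if total > budget:
        problems.append(f"total {total} W exceeds the {budget} W graphics budget")
    return problems


# Hypothetical plans; replace the wattages with your cards' rated board power.
print(check_gpu_plan([300, 300], cpu_count=1))    # []
print(check_gpu_plan([300, 300], cpu_count=2))    # ['total 600 W exceeds the 320 W graphics budget']
print(check_gpu_plan([50, 50, 50], cpu_count=2))  # ['3 cards exceed the official maximum of 2']
```

A plan can pass the power check and still exceed the official card count. This is why three low-power cards are flagged even though 150 W is well under the 320 W budget.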

Feasible Options

- Two High-End GPUs: This is the standard, fully supported configuration. You can install two double-width GPUs, like an NVIDIA Quadro RTX 6000 or AMD Radeon Pro WX 9100, and stay within the 600W power budget and physical constraints.

- Three (or More) Low-Power, Single-Width GPUs: It may be possible to install three or more single-width GPUs (e.g., NVIDIA Quadro P1000 or T1000) if you have the dual CPU configuration and can manage the power requirements and physical spacing.

- Modifications (Use at Your Own Risk): Some users in community forums have experimented with more than two GPUs in similar systems (like the T7920), but this often leads to technical difficulties, potential boot issues, and goes beyond official Dell support.

Conclusion

For a reliable and supported setup, the Dell Precision 7820 Tower is designed for a maximum of two GPUs. Attempting to add a third GPU, especially a high-power model, is not officially supported and will likely face power and compatibility issues.

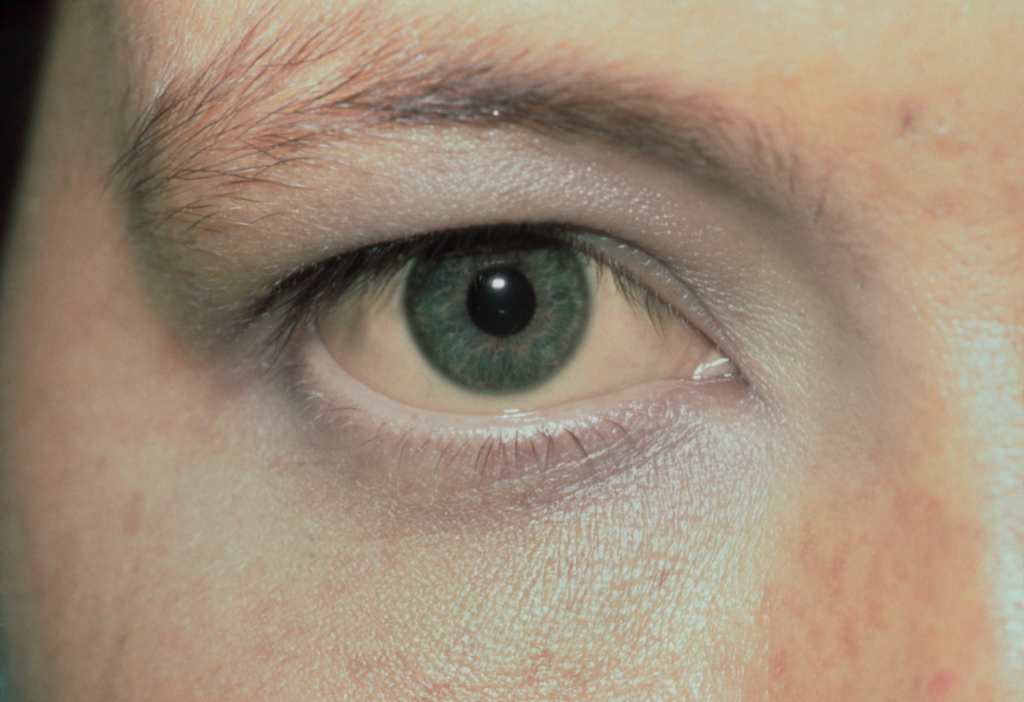

I need a presentation of at most 20 pages on modern AI with LLMs.

This is what Claude Code generated after the document skills were installed, although it took quite some time to finish:

https://laserphotonics.uk/wp-content/uploads/2025/11/Modern_AI_with_LLMs.pptx


Prof Zengbo Wang Profile - Sept 2025

Professor Zengbo (James) Wang

Professor in Imaging and Laser Micromachining

Overview

Prof. Zengbo Wang received BSc and MSc degrees in physics from Xiamen University, P.R. China, and a PhD in Electrical and Computer Engineering from National University of Singapore (NUS), Singapore.

He is currently a professor at Bangor University and was a visiting professor at Northeastern University (Boston, USA, 2022-2023). He was awarded a 2022 Leverhulme Trust Research Fellowship to pursue cutting-edge research on AI/deep-learning-assisted microsphere nanoscopy and on-demand photonic design. Before joining Bangor University in 2012, he was a lecturer at the University of Manchester between 2009 and 2012.

In 2025, Prof. Wang received the prestigious APEX Award (jointly supported by the Royal Society, the British Academy, the Royal Academy of Engineering, and the Leverhulme Trust) for his interdisciplinary project “Listen to the Cell Breathe”. The project investigates viral infections using a hybrid technique that combines nano-vibration sensing and super-resolution imaging, integrated through AI-enhanced fusion and analysis. It is the first APEX Award project led by a Principal Investigator based at a Welsh university since the scheme’s launch in 2017.

His research expertise spans advanced AI and machine learning, laser-based manufacturing, nanophotonics, metamaterials, solar energy, and fiber sensors, with a special focus on super-resolution microscopy, imaging, sensing, laser micro- and nano-processing, and AI for business and industry. He has a strong publication and citation record. He is an elected Senior Member of Optica (2017) and is known as the leading pioneer of microsphere- and nanosphere-based superlens technologies. Please visit his team website at https://laserphotonics.uk/

Additional Contact Information

Email: [email protected]

Office: Dean Street Building, Bangor University, Room 228

Tel: +44(0) 1248 382696

Fax: +44(0) 1248 362686

Web: https://laserphotonics.uk

Google Scholar: https://scholar.google.co.uk/citations?user=5MBSTZ8AAAAJ&hl=en

Research Interests

1) AI and Deep Learning in Photonics Design, Imaging, and Manufacturing

Prof. Wang’s current research is strongly focused on the integration of Artificial Intelligence (AI) into science and engineering, particularly within the field of photonics. As part of a Leverhulme Trust Research Fellowship, he leads a programme dedicated to developing AI-driven super-resolution imaging technologies. His team is actively working at the intersection of AI, optics, and advanced manufacturing to improve resolution, speed, and precision in imaging and fabrication.

They work with a wide range of AI models—including but not limited to Convolutional Neural Networks (CNN), Recurrent Neural Networks (RNN), and Generative Adversarial Networks (GAN)—to support applications such as AI-assisted photonic inverse design, super-resolution imaging for biological and nanoscale systems, and laser-based nano-patterning and process control. The team also explores the application of Large Language Models (LLMs) in scientific and engineering contexts, including the use of Retrieval-Augmented Generation (RAG) and fine-tuning for domain-specific tasks.

In addition to academic research, the group supports industry by helping businesses adopt cutting-edge AI technologies to develop new products, processes, and services.

2) Superlens and Super-resolution Microscopy

Prof. Wang and his team are internationally recognised for pioneering microsphere- and nanoparticle-based dielectric superlens technologies. Notable developments include the ‘microsphere superlens’ and ‘microsphere nanoscope’ (2011, Nature Communications), the ‘spider silk superlens’ (2016, Nano Letters), and the ‘nanoparticle superlens’ (2016, Science Advances). These breakthroughs have received widespread media attention, featuring in outlets such as the BBC, New York Times, Daily Mail, Independent, ABC Australia, and China Xinhua, and were highlighted in RCUK’s “50 Big Ideas for the Future.” In 2023, this body of work was featured in the seminal article “Roadmap on Label-Free Super-resolution Imaging” in Laser & Photonics Reviews.

Prof. Wang was a finalist for Bangor University’s 2016 Research Excellence Award and Dissertation/Thesis Supervisor of the Year. He was also selected as a member of the 2015 Welsh Crucible cohort—a group of emerging research leaders in Wales—and previously received the Most Outstanding R&D Staff Merit Award in 2005 for his contributions to laser cleaning at DSI Singapore.

The latest nanoparticle superlens developed by his team is among the most powerful in the field, producing sharper and higher-quality images of nanoscale features, including 50 nm polystyrene nanoparticles, 45 nm gaps in semiconductor chips, and 90–100 nm adenoviruses.

3) Laser-based Manufacturing and Processing

Prof. Wang’s group conducts research into a wide spectrum of laser-based processes—including cutting, welding, drilling, texturing, marking, cleaning, and polishing—alongside emerging applications such as laser-enhanced seed germination and yield improvement. The laboratory hosts a comprehensive range of laser systems, including nanosecond fiber and UV lasers, a high-power, high-repetition-rate femtosecond laser (Jasper 30W, four wavelengths), and CO₂ lasers.

Supporting instrumentation includes advanced 3D laser scanning microscopes (Olympus OLS5000 and DSX1000) and an environmental scanning electron microscope (E-SEM) with integrated electron-beam lithography (EBL) for precise nano-manufacturing and characterisation.

This research is supported by the pan-Wales “Centre for Photonics Expertise (CPE)” programme, which collaborates with Welsh industry across sectors such as electronics, aerospace, automotive, and energy. Key partners include Qioptiq, Tata Steel, Welsh Slate, and Transcend Packaging. The team has developed a unique capability in direct laser nano-marking using specially engineered superlenses, achieving sub-100 nm resolution.

4) Fiber-based Optical and Quantum Sensing

The team also explores cutting-edge sensing technologies, including:

- Novel surface-functionalised FBG/LPG sensors

- Ultrasensitive cell vibration detection at the picometer scale

- Optical fiber-based trapping, imaging, and sensing

- Quantum sensing platforms

These capabilities support fundamental research as well as emerging biomedical and environmental applications.

5) Shift-free Anti-laser Metamaterial Filters

Prof. Wang’s group is among the few research teams to have successfully developed metamaterial-based, wide-angle, shift-free narrowband filters designed for anti-laser-strike protection. Ongoing work extends this innovation to underwater optical communication systems.

6) Solar Cell Research

Research efforts also extend to advanced photonic and laser technologies for high-efficiency solar cells, including:

- Novel laser-trapped nanostructures and metasurfaces for light management

- Solar-driven nanoparticle synthesis and water splitting

- Applications of nanophotonics and plasmonics in perovskite solar cells

- Precision laser manufacturing techniques to improve solar cell performance

Postgraduate Project Opportunities

I welcome PhD candidates to join my team to work in the following areas: (1) AI/deep learning for photonics and imaging; (2) laser nano-manufacturing; (3) optical neural networks; (4) nanophotonics and metasurfaces; (5) super-resolution science and technology; (6) optical fiber sensors; (7) renewable energy (solar, etc.).

Projects

- Listen to the Cell Breathe: Investigating Viral Infection in real-time with Nano-Vibration Detection and Super-Resolution Imaging, 01/10/2025 – 15/10/2027 (Not started)

- Advanced Thermal Management for Space Electronics (ATMS), 01/07/2025 – 15/01/2027 (Active)

- Feasibility Study and Preparation for Horizon Europe Bid: Laser-Assisted Carbon Nano-Onion Cancer Treatment and Monitoring, 01/07/2025 – 15/07/2026 (Active)

- Enhancing Research Capacity in North Wales with Environmental SEM and Integrated EBL, 01/01/2023 – 30/09/2024 (Finished)

- Deep learning assisted microsphere nanoscopy and on-demand photonic design, 27/07/2022 – 05/11/2024 (Finished)

- KESS II MRes with Lightfuture Ltd - BUK2216, 01/02/2021 – 31/03/2024 (Finished)

- A novel bionanoscopy platform based on integration of optical superlens array and scanning probe microscopy, 01/12/2020 – 31/03/2025 (Finished)

- 80761 BU262 Development of robust large-area manufacturing process for optical metamaterial applications, 01/08/2020 – 30/10/2023 (Finished)

- Advancing advanced manufacturing and photonics research in Wales with cutting edge 3D measuring laser confocal microscope, 01/08/2019 – 01/08/2022 (Finished)

- Nanoparticle-based superlens and optical nanoscope, 20/03/2019 – 01/08/2022 (Finished)

- 81400 The Centre for Photonics Expertise (CPE), 01/08/2018 – 31/07/2024 (Finished)

- 81400 The Centre for Photonics Expertise (CPE), 01/08/2018 – 31/12/2021 (Finished)

- Combat Counterfeiting with Invisible Photonic Printing (CCIPP), 01/01/2018 – 30/09/2020 (Finished)

- KESS II PhD project with Qioptiq Ltd BUK289, 01/10/2016 – 01/08/2022 (Finished)

- Scanning microsphere nanoscopy for live cell/virus imaging, 01/12/2015 – 21/03/2017 (Finished)

- 3D Printing of Functional Photonic Metamaterial Devices, 01/01/2015 – 30/06/2018 (Finished)

- On-chip Microfluidic Nanoscope for Super-resolution Imaging and Analysis, 01/10/2014 – 20/01/2018 (Finished)

Add the Chrome MCP server to Claude Code

On Linux:

claude mcp add-json chrome-mcp '{"type": "http", "url": "http://127.0.0.1:12306/mcp"}'

On Windows:

claude mcp add-json chrome-mcp "{\"type\":\"http\",\"url\":\"http://127.0.0.1:<PORT>/mcp\"}"
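Copying these commands from a web page often turns straight quotes into curly ones. The shell only treats straight ASCII quotes as quoting, so the copied command then fails. Below is a small Python sketch that builds the Linux command with correct quoting. It uses `json.dumps` for the config and `shlex.join` for POSIX shell quoting. It assumes Claude Code's `claude mcp add-json <name> <json>` subcommand and port 12306 from the Linux example. It only prints the command and does not run it.

```python
import json
import shlex


def mcp_add_json_command(name: str, url: str) -> str:
    """Build a POSIX-shell-safe `claude mcp add-json` command for an HTTP MCP server."""
    # json.dumps always emits straight ASCII double quotes.
    config = json.dumps({"type": "http", "url": url})
    # shlex.join wraps the JSON argument in single quotes for bash/zsh.
    return shlex.join(["claude", "mcp", "add-json", name, config])


print(mcp_add_json_command("chrome-mcp", "http://127.0.0.1:12306/mcp"))
```

The printed line can be pasted straight into a Linux terminal. On Windows, the escaped-quote form above is still needed, because `shlex` produces POSIX quoting only.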

Listen to the Cell Breathe logo

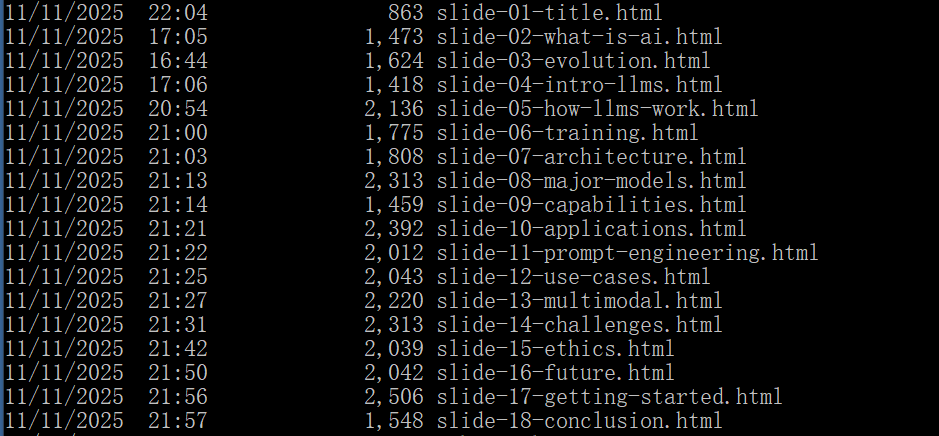

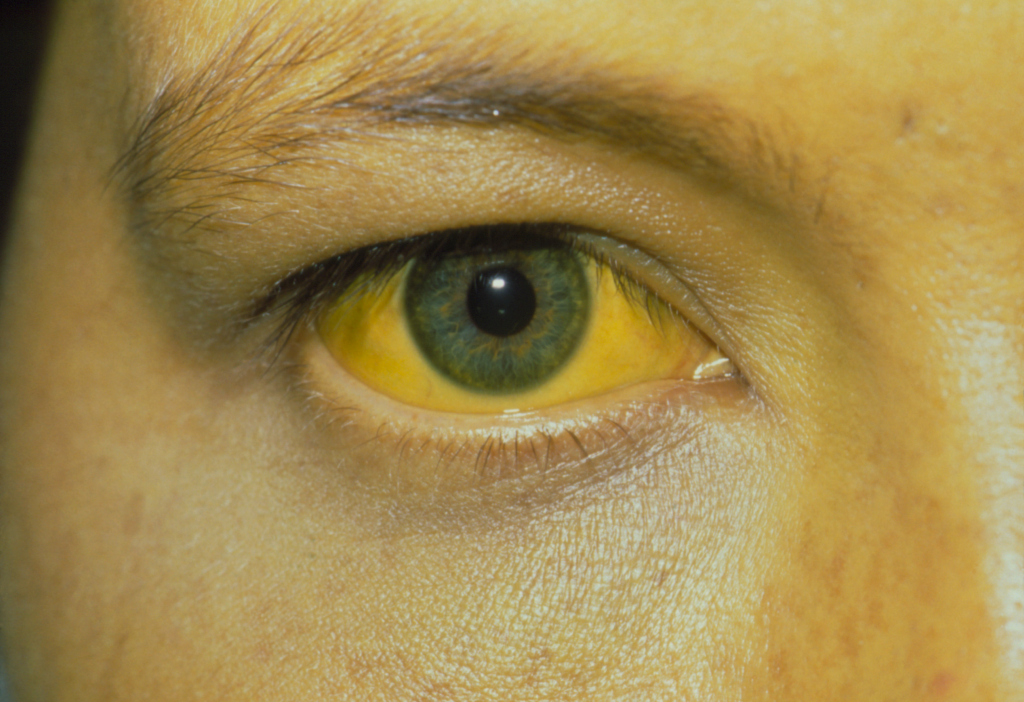

Computer filter on CDC jaundice image

How to create an SSH SOCKS proxy

ssh -D 0.0.0.0:$SOCKS_PORT -q -C -N $USER@$SERVER
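The one-liner above uses SSH dynamic port forwarding (`-D`). ssh listens on the given address and port as a SOCKS proxy, and it forwards each client connection through the encrypted link to `$SERVER`, which then makes the outgoing connection. Below is a small Python sketch that builds the same command as an argument list, with the flags explained. The defaults are hypothetical: port 1080 (the conventional SOCKS port) and bind address 127.0.0.1, which keeps the proxy local to this machine. Binding to 0.0.0.0, as in the original command, makes the proxy reachable from other machines on the network, so only do that on a trusted network.

```python
import shlex


def socks_proxy_argv(user: str, server: str, port: int = 1080, bind: str = "127.0.0.1") -> list[str]:
    """Build the argv for an SSH dynamic-forwarding (SOCKS) proxy, mirroring the command above."""
    return [
        "ssh",
        "-D", f"{bind}:{port}",  # listen on bind:port as a local SOCKS proxy
        "-q",                    # quiet mode: suppress most warnings and diagnostics
        "-C",                    # compress all data sent through the connection
        "-N",                    # do not run a remote command; port forwarding only
        f"{user}@{server}",
    ]


argv = socks_proxy_argv("alice", "example.com")  # hypothetical user and host
print(shlex.join(argv))  # ssh -D 127.0.0.1:1080 -q -C -N alice@example.com
# To actually start the tunnel (blocks until ssh exits): subprocess.run(argv, check=True)
```

Clients such as a browser are then pointed at SOCKS5 proxy `127.0.0.1:1080` (or whichever address and port you chose).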

Python 3.10 + PyTorch on Windows Server (Miniconda)

Successful installation of Python 3.10 and PyTorch on Windows Server 2019 in the ‘ViT’ conda environment on 21 December 2024. Note: the installation was done by logging in to the server with TeamViewer; connecting through Desktopanywhere caused an SSL error.

Step 1: conda create --name ViT python=3.10, then conda activate ViT

Step 2: pip install torch==1.11.0+cu113 torchvision==0.12.0+cu113 torchaudio -f https://download.pytorch.org/whl/torch_stable.html (this requires python3.10)

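Step 3: pip install "numpy<2.0" (PyTorch 1.11 was built against NumPy 1.x and is not compatible with NumPy 2; the straight quotes stop the shell from treating < as a redirect)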
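After the three steps, a quick check can confirm that pip did not pull in a different torch build or NumPy 2.x. Below is a minimal sketch that uses only the standard library's `importlib.metadata`. The expected version strings are copied from Step 2, so adjust them if you install different wheels. `cuda_available` is only True if the CUDA 11.3 build can see a working GPU driver. Run it inside the activated ViT environment.

```python
import sys
from importlib import metadata

# Versions from Step 2 above; adjust if you install different wheels.
EXPECTED = {"torch": "1.11.0+cu113", "torchvision": "0.12.0+cu113"}


def check_environment() -> dict[str, bool]:
    """Report whether the active environment matches the install steps above."""
    report = {"python_3_10": sys.version_info[:2] == (3, 10)}
    for package, wanted in EXPECTED.items():
        try:
            report[package] = metadata.version(package) == wanted
        except metadata.PackageNotFoundError:
            report[package] = False
    try:
        numpy_major = int(metadata.version("numpy").split(".")[0])
        report["numpy_below_2"] = numpy_major < 2
    except metadata.PackageNotFoundError:
        report["numpy_below_2"] = False
    try:
        import torch  # imported lazily so the check still runs without torch

        report["cuda_available"] = bool(torch.cuda.is_available())
    except (ImportError, OSError):
        report["cuda_available"] = False
    return report


if __name__ == "__main__":
    for name, ok in check_environment().items():
        print(f"{name}: {'OK' if ok else 'check this'}")
```

Any line reporting "check this" points to the step that needs redoing. For example, `numpy_below_2: check this` means Step 3 was skipped or later undone.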